Take a closer look at the Operational Data Warehouse.

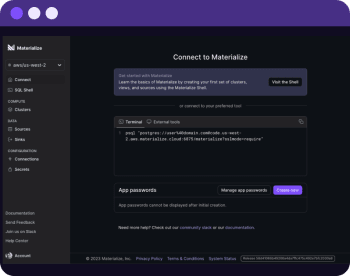

Deliver up-to-the-second results with Materialize

Trusted by teams that deliver fresh, correct results.

Built to be your Trusted Operational Platform.

Delivering operational, up-to-the-second data is a challenge. Data warehouses are built to process in batches, adding complexity and cost as you move closer to real-time. Microservices are expensive and inconsistent. Stream processors are hard to manage and implement. Materialize is the only solution that packages the usability of your data warehouse with the power of streaming.

Freshness

Sub-second updates on results as data arrives.

Consistency

Strong consistency, not eventual.

Responsiveness

Consume results with the interactivity of a SQL database.

Materialize

Continuous computation for fresh results with strong consistency, packaged in a responsive SQL DB abstraction.

Analytical Data Warehouse

Built for infrequent batch updates; streaming features are expensive add-ons with inadequate performance.

Microservices + Caches

Strong consistency is impossible in bespoke services, with no economies of scale and a high maintenance burden.

Stream Processors

Low-level tools that require expensive new skill sets and disruptive architectures and workflows.

How Materialize Works

Materialize takes the familiar data warehouse architecture and makes key changes to create a uniquely powerful operational platform.

1. Start with a Cloud Data Warehouse Architecture

Storage-Compute separation delivers workload isolation and unlimited scale.

2. Add Real-Time Data Sources

Materialize provides several streaming sources that continuously ingest data from upstream OLTP databases, message brokers, and other systems.

3. Transform continuously with a Streaming Engine

Instead of waiting for queries and running one-shot batch transformations, data is transformed incrementally in the compute layer.

4. Add Streaming Output Options

In addition to serving standard SQL queries with high concurrency and low latency, users can subscribe to updates from a query or sink updates out for event-driven architectures.
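Steps 2 through 4 can be sketched in SQL. This is a minimal illustration under stated assumptions, not a reference setup: the names `kafka_conn`, `orders_src`, `order_totals`, and `order_totals_sink`, the topic names, and the JSON fields `user_id` and `amount` are all hypothetical, and a Kafka connection is assumed to exist already. Exact options may differ between Materialize versions.

```sql
-- Assumption: a Kafka connection named kafka_conn was created beforehand
-- with CREATE CONNECTION. All object and topic names here are hypothetical.

-- Step 2: continuously ingest a topic. With FORMAT JSON, each message
-- arrives in a single jsonb column named "data".
CREATE SOURCE orders_src
  FROM KAFKA CONNECTION kafka_conn (TOPIC 'orders')
  FORMAT JSON;

-- Step 3: define the transformation once; it is maintained incrementally
-- as new messages arrive.
CREATE MATERIALIZED VIEW order_totals AS
SELECT data->>'user_id' AS user_id,
       SUM((data->>'amount')::numeric) AS total_amount
FROM orders_src
GROUP BY 1;

-- Step 4a: serve the result with an ordinary query.
SELECT * FROM order_totals WHERE user_id = 'VPLaKV';

-- Step 4b: push every change downstream for event-driven consumers.
CREATE SINK order_totals_sink
  FROM order_totals
  INTO KAFKA CONNECTION kafka_conn (TOPIC 'order-totals')
  FORMAT JSON
  ENVELOPE DEBEZIUM;
```

The same view backs both output paths, so point queries and the sink reflect the same continuously maintained result.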

A better way for fast-changing data

Data is queried in SQL and updated with sub-second latency as changes happen.

Managed in standard SQL

Incrementally Maintained Views

Write complex SQL transformations as materialized views that efficiently update themselves as inputs change.

    CREATE MATERIALIZED VIEW my_view AS
    -- COUNT(DISTINCT ...) keeps the two joins from inflating each other's counts.
    SELECT users.id AS userid,
           COUNT(DISTINCT api.id) AS api_calls,
           COUNT(DISTINCT pageviews.id) AS pageviews
    FROM users
    JOIN pageviews ON users.id = pageviews.userid
    JOIN api ON users.id = api.userid
    GROUP BY users.id;

| userid | api_calls | pageviews |
|---|---|---|
| VPLaKV | 400 | 20 |
| MN37Mt | 60 | 9 |
| 1fT4KY | 72 | 42 |
| sT4QY | 10 | 342 |

Sliding Windows

Write queries that filter to a window of time anchored to the present; Materialize updates the results as time advances.

    CREATE MATERIALIZED VIEW my_window AS
    SELECT date_trunc('minute', received_at) AS minute,
           COUNT(*) AS order_ct,
           SUM(amount) AS revenue
    FROM orders
    -- Keep only orders received within the last 5 minutes.
    WHERE mz_now() < received_at + INTERVAL '5 minutes'
    GROUP BY 1;

| minute | order_ct | revenue |
|---|---|---|

SQL Alerting

Write alerts as SQL queries with filters and subscribe to new rows as they appear.

    SELECT userID, email, MAX(orders.id) AS last_order
    FROM users
    JOIN orders ON orders.userID = users.id
    GROUP BY userID, email
    -- Use a filter to surface users with a high % of fraud
    HAVING SUM(is_fraud) / COUNT(orders.id)::FLOAT > 0.5;

| userID | email | last_order |
|---|---|---|
| REOtIb | a@gmail.com | 13/12/2022 |
| Y5KBE8 | b@yahoo.com | 9/12/2022 |
| Wj7JQ0 | c@hotmail.com | 13/12/2022 |
| tPCQ0 | d@xyz.com | 13/11/2022 |
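One way to receive alert rows as they appear is Materialize's `SUBSCRIBE` command. The following is a minimal sketch, assuming the alert query is first saved as a materialized view. The view name `fraud_alerts` is hypothetical; the tables and columns come from the example query, and `is_fraud` is assumed to be an integer flag.

```sql
-- Hypothetical view name; tables and columns follow the alert query.
CREATE MATERIALIZED VIEW fraud_alerts AS
SELECT userID, email, MAX(orders.id) AS last_order
FROM users
JOIN orders ON orders.userID = users.id
GROUP BY userID, email
HAVING SUM(is_fraud) / COUNT(orders.id)::FLOAT > 0.5;

-- Stream changes to the alert set. SNAPSHOT = false skips rows that
-- already match, so only changes from this point on are emitted.
SUBSCRIBE TO fraud_alerts WITH (SNAPSHOT = false);
```

Each emitted row carries an `mz_timestamp` column and an `mz_diff` column; a diff of 1 means a user entered the alert set and -1 means the user left it. In an interactive `psql` session, wrapping the statement as `COPY (SUBSCRIBE TO fraud_alerts) TO STDOUT` is one way to print the updates as they arrive.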

Start taking action on up-to-the-second data

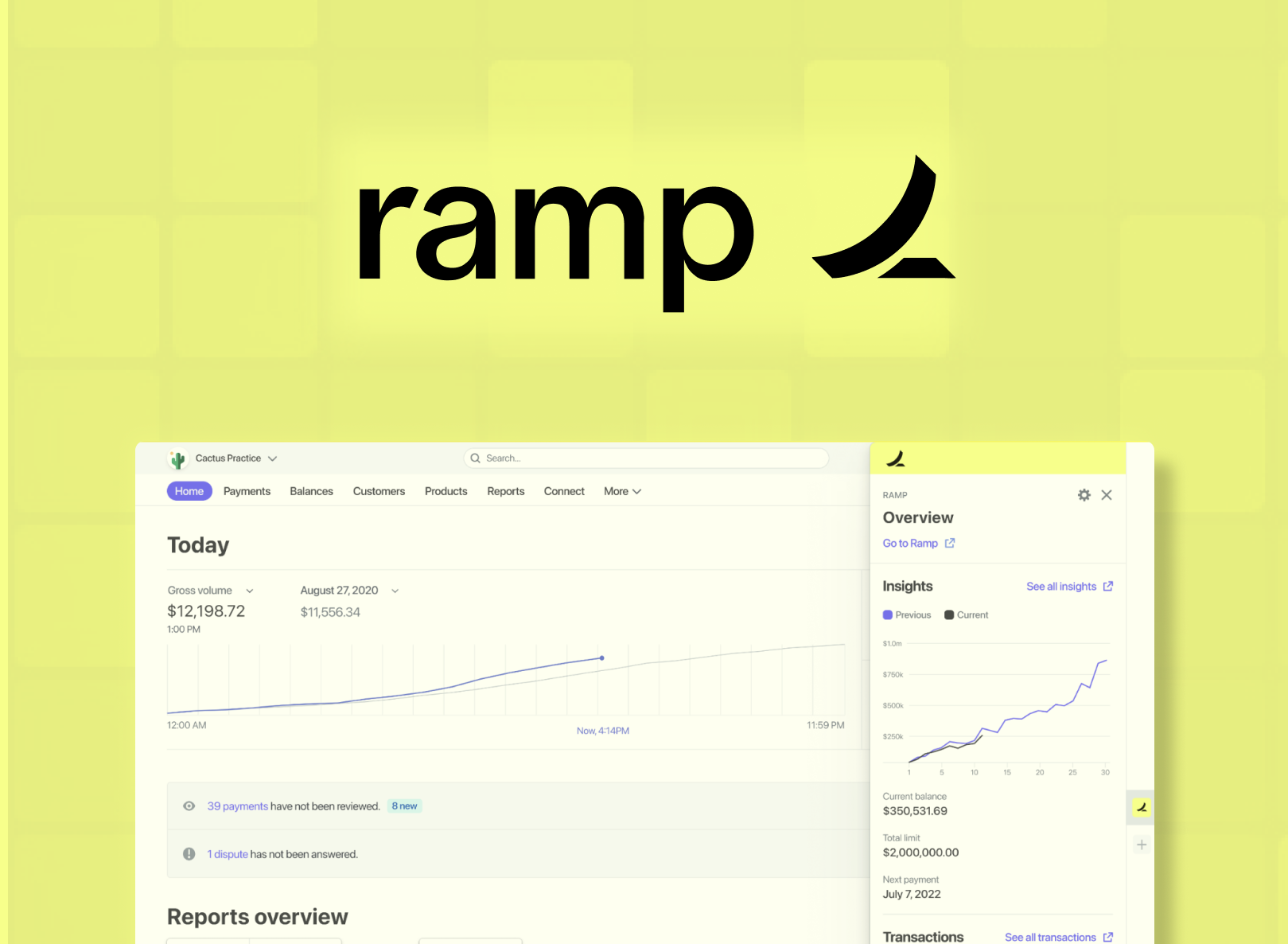

By moving SQL models for fraud detection from an analytics warehouse to Materialize, Ramp cut lag from hours to seconds, stopped 60% more fraud, and reduced infrastructure costs by 10x.

Ryan Delgado, Staff Software Engineer, Data Platform, Ramp

Build Faster with a Batteries-Included Platform

Materialize combines the power of streaming data with the scalability and extensibility of your favorite data warehouse.

Cloud-Native

Bring your team, data, and SQL; we'll handle the infrastructure.

Managed Connectors

Materialize handles the complexity of consuming data in real-time via Postgres, Kafka, and Webhook sources.

Modern Security

From AWS PrivateLink compatibility to RBAC, Materialize is built with security at its foundation.

SOC 2 Compliant

Materialize is SOC 2 Type 2 compliant.